Google Search starts seeing and listening live

New Gemini language model

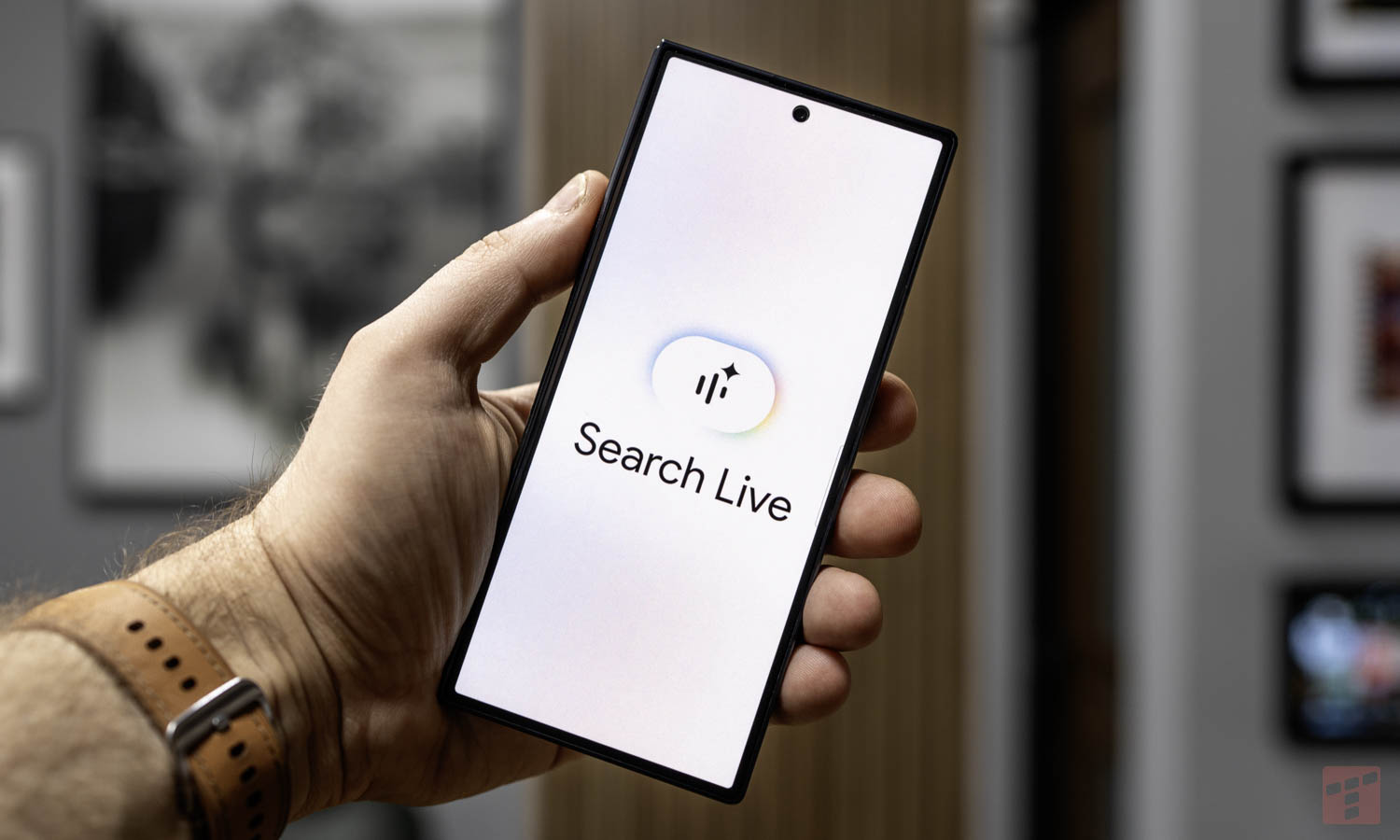

Google is rolling out Search Live to everyone who uses AI Mode. The feature is built on the Gemini 3.1 Flash Live voice model, which was designed from the start to support multiple languages. This is meant to keep responses fast and the conversation fluid, and it lets users around the world talk to the system in their preferred language.

The feature is intended for situations that call for an immediate answer, where typing a query on a keyboard takes time and can be inconvenient. To use it, open the Google app on your Android or iOS device and tap the new Live icon below the main search bar.

The smartphone’s camera analyzes the surroundings

After activating this mode, you ask a question aloud. The search engine processes the query and generates a response. You can then ask follow-up questions and continue the exchange. The system also suggests links to pages that expand on the topic, which is intended to help with more complex problems.

Search Live works directly with your smartphone’s camera. The tool analyzes and recognizes physical objects in your surroundings. This helps, for example, with home DIY projects or furniture assembly. The app captures the camera feed and gives guidance based on what it sees, much like a video call.

Integration with Google Lens

You can also access Search Live directly from Google Lens. The button is located at the bottom of the app’s interface. Camera sharing is then turned on by default, so you can start talking about the objects visible in the frame right away.

Google says it will continue to develop these tools in the coming months.